World Models Are Not Only About Predicting the Future: From Cognitive Maps to Abstract Structure

Learning the reusable structures hidden behind continuous experience

Introduction

In recent years, world models have become a central concept in embodied intelligence and self-supervised video generation. Broadly speaking, a world model aims to learn how an environment evolves over time: given a current state and some action or change signal, the model predicts what will happen next. In many discussions, world models are understood simply as models that “predict future images.” For example, given the first few frames of a video, the model generates subsequent frames; given a current observation and an action, it predicts the next state. This is certainly an important function of world models. Yet if world models are reduced to video prediction or image generation, a deeper issue is easily missed. The real value of a world model lies not merely in generating the next frame, but in learning the reusable structures hidden behind continuous experience.

Humans do not understand the world by memorizing countless static images. Instead, they abstract stable internal structures from experience: up and down, left and right, near and far in space; object rotation and translation; grasping, pushing, and pulling between hands and objects; forward motion and turning of a camera in an environment. These structures are not properties of a particular scene. They are patterns of change that can be reused across scenes, objects, and tasks.

Therefore, the central question of world modeling can be reformulated as follows:

Can an intelligent system learn reusable abstract structures from continuous observations, and use such structures to predict the future, transfer to new scenes, and support downstream planning and control?

From this perspective, the two works introduced in this article, both recently accepted by ICML 2026, form a clear research trajectory:

- Structure Abstraction and Generalization in a Hippocampus-Entorhinal Inspired World Model: when action labels are unavailable, how can reusable structures be abstracted from continuous videos?

- DiLA: Disentangled Latent Action World Models: when latent actions must support both abstraction and prediction, how can content-structure disentanglement resolve this tension?

Together, these two works support the following view: the key to world models is not prediction alone, but the learning, organization, and use of structure. A genuinely useful world model should not merely be a future-frame generator; it should be a structured latent dynamical system.

Abstract Structure

We first need to clarify what is meant by abstract structure. A simple example is useful. Imagine a person arriving in an unfamiliar city for the first time. Initially, they only see local streets, buildings, intersections, and landmarks. Each momentary visual input is local and incomplete. As they continue walking and exploring, however, they gradually learn which buildings are adjacent, which road leads to the square, and which turn brings them back to a previously encountered location. This process is not simply the memorization of every visual frame. What the brain constructs is an internal spatial structure: relations among current location, orientation, distance, routes, and landmarks. This structure can be understood as a two-dimensional cognitive map. More importantly, it is reusable. When the same person enters another city, they do not need to relearn basic structural relations such as “left and right,” “forward and backward,” “turning,” or “near and far.” New visual content can be bound to an already familiar spatial structure, allowing the person to understand the new environment quickly. This suggests that many forms of human generalization arise not from memorizing particular scenes, but from learning and reusing abstract structures.

Two-dimensional spatial navigation is only the most intuitive example. More generally, many changes in the world can be understood as forms of abstract structure:

- the translation, rotation, and scaling of an object are geometric structures in the visual world;

- grasping, pushing, pulling, and lifting are dynamical structures in interactive environments;

- forward motion, turning, and detouring of a camera are motion structures in navigation;

- even higher-level conceptual transfer ultimately depends on the reuse of some relational structure.

What these structures share is that they do not depend on the color, texture, identity, or background of a specific object. Rotation is not a property unique to a particular apple; grasping is not an action unique to a particular hand. Structure describes how states change, rather than how states look. Abstract structure can therefore be understood as a compressed representation of spatiotemporal regularities. It is not a static image feature, but a pattern of change extracted from continuous sequences.

In computational neuroscience, cognitive map theory

Within this framework, classical cognitive map models such as TEM

- HPC (the hippocampus) binds content and structure: it binds concrete episodes, visual details, contextual information, and individual experiences;

- MEC (the medial entorhinal cortex) encodes abstract structure: it represents more abstract, regular, and reusable relational structures, especially those related to path integration, positional change, and continuous transitions.

The interaction between HPC and MEC allows episode-specific content to be bound with abstract structure. By performing path integration in MEC to predict subsequent states, this circuit can be regarded as a biological implementation of a world model. At a higher level, the inspiration provided by the HPC-MEC circuit is not merely about “spatial navigation,” but about a more general principle:

Abstract structure is not a geometric description of static space, but a joint compression of spatiotemporal regularities.

This definition is important because it directly connects abstract structure learning with world model learning. If visual sequences already contain all the information needed to infer spatiotemporal change, then the most natural test of whether a model has learned structure is whether it can use that structure to predict future states. In other words, prediction is not the final goal; it is a test of whether structure learning has succeeded. In this sense, a world model is no longer merely a system for generating future images, but a structure learner: it seeks to extract low-dimensional regularities that determine future changes from continuous observations, and then uses prediction errors to validate whether those regularities are effective. This moves our research objective toward using world models to learn reusable spatiotemporal structures.

In AI, world models can be broadly divided into several categories:

- 3D reconstruction and scene representation, whose goal is to recover the geometric structure of the environment;

- video generation, whose goal is to generate visually continuous and realistic future frames;

- action-conditioned forward dynamics models, whose goal is to learn how states change under actions, thereby supporting control and planning.

Our focus is a special form of the third category: latent world models, in the spirit of JEPA

However, latent world models also face an important problem: the latent space often has to serve two roles at once. On one hand, it must be sufficiently abstract to support transition learning. On the other hand, it must preserve enough content information to generate the next frame. As a result, latent world models typically do not learn an abstract structure that is independent of scene information: the latent dynamics they learn remain entangled with substantial content information. Once the model is transferred to new objects or new backgrounds, the learned transition regularities may become difficult to reuse.

The HPC-MEC World Model

The functional distinction suggested by the HPC-MEC circuit provides a useful direction: a hierarchical world model can separate content-rich representations from structural representations. However, both cognitive map models and latent world models typically assume that the action $a_t$ is known. Robot datasets contain control commands; game environments provide discrete key presses; navigation tasks provide velocity and direction. Yet if the goal is to learn abstract structure, this assumption introduces a hidden trap: once the action space is predefined, the internal structure has already been partially fixed.

For example:

- if actions are defined as “up, down, left, and right,” the model is given a discrete grid structure;

- if actions are defined as continuous velocity and direction, the model is given a continuous two-dimensional motion manifold;

- if actions are defined as robotic joint angles, the model is given a control structure in the robot’s embodiment-specific coordinate system.

In these cases, the model is not spontaneously learning abstract structure from observations; rather, it is learning latent state transitions on top of already specified action semantics. When structure learning depends on the definition of the action space itself, misalignment across different action spaces can hinder structural generalization. Put differently, learning abstract structure is deeply coupled with learning the action space. If the action space is manually specified, then what is called “structure learning” may already be partly determined by the action definition.

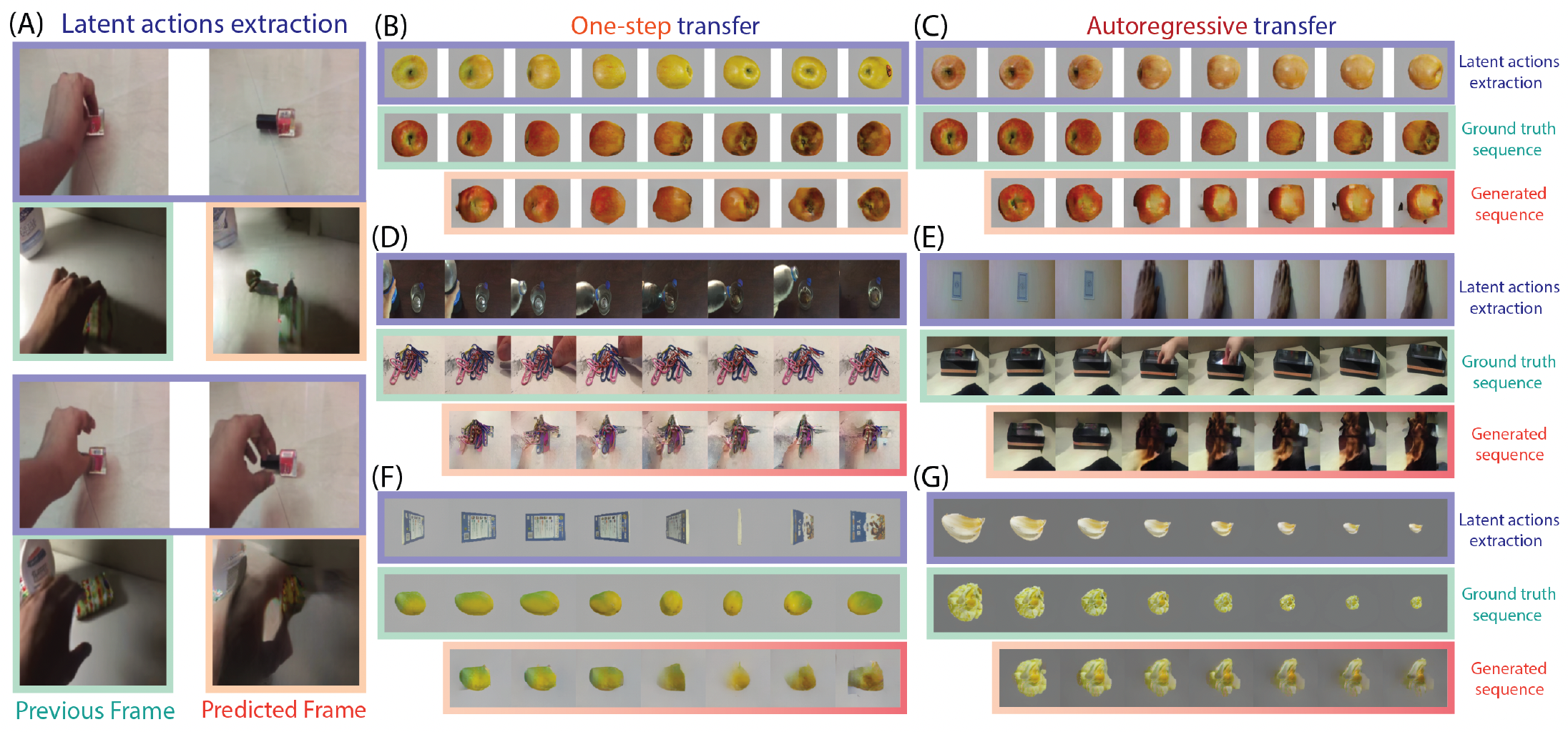

The work Structure Abstraction and Generalization in a Hippocampus-Entorhinal Inspired World Model

How can a world model simultaneously learn concrete visual content and abstract dynamic structure from videos without action labels, and transfer the learned structure to new objects and environments?

The paper proposes a hierarchical world model inspired by the hippocampal-entorhinal circuit. It extracts reusable latent transitions from continuous visual experience and uses path integration to support future prediction and structural generalization.

Model Design

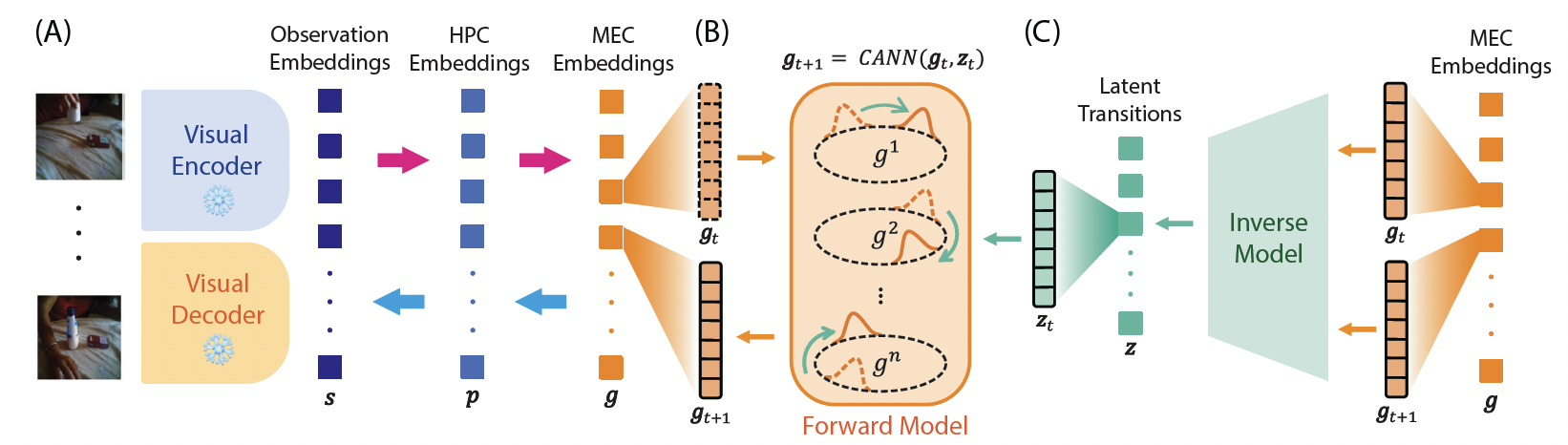

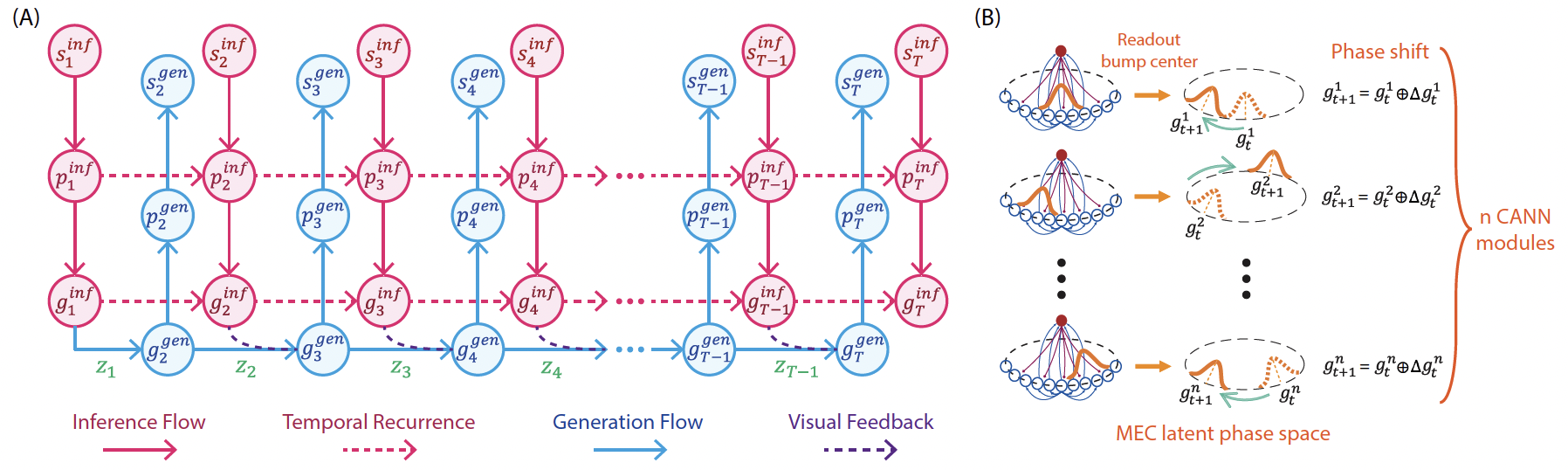

The model consists of two main components: an HPC-MEC coupling model and an inverse dynamics model (IDM). It first uses a pretrained multi-scale VQ-VAE to extract visual representations, then obtains HPC and MEC representations through a hierarchical architecture. The IDM infers latent transitions from differences between consecutive MEC representations.

The key design is not simply to construct an encoder-decoder, but to create two functionally distinct information flows.

The first is the visual inference flow. Input video frames are encoded into visual representations, passed into the HPC to form content-rich representations with historical information, and then compressed into the MEC to produce lower-dimensional representations biased toward structure.

The second is the generation flow. The model does not directly predict the next frame. Instead, it uses a latent transition in MEC space to advance the current state to the next state, and then reconstructs future visual representations through the generative pathway. This process is inspired by path integration in MEC grid cells. The latent transition can be understood as an “abstract velocity” or “change operator.” It does not describe how pixels change; it describes how the latent structure should move.

The core mechanism of the MEC is based on a Continuous Attractor Neural Network (CANN). In neuroscience, CANNs are often used to explain how grid cells maintain a stable activity bump in continuous space and perform path integration driven by velocity inputs. This idea is introduced into the design of the MEC: the MEC representation denotes the current state of the abstract structure, while the latent transition acts as a velocity-like input that drives structural change. A forward function maps the current MEC state and the latent transition into a phase displacement, which then shifts the MEC state to obtain the structural state at the next time step.
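The path-integration step described above can be illustrated with a toy ring attractor. The sketch below is not the paper's implementation: it assumes a one-dimensional ring of 64 units, a hand-written bump shape, and a hypothetical `forward` function that is just a fixed linear gain (in a real model this mapping would be learned). It only shows the mechanism of turning a latent transition into a phase displacement and shifting the activity bump by that amount.

```python
import numpy as np

N_UNITS = 64  # number of units on the ring (toy choice, not from the paper)
PHASES = np.linspace(0.0, 2.0 * np.pi, N_UNITS, endpoint=False)

def bump(center, sharpness=10.0):
    """Activity bump on the ring, peaked at phase `center` (radians)."""
    return np.exp(sharpness * (np.cos(PHASES - center) - 1.0))

def shift(state, displacement):
    """Rotate a periodic activity pattern by `displacement` radians
    using the Fourier shift theorem."""
    freqs = np.fft.fftfreq(N_UNITS, d=1.0 / N_UNITS)  # integer frequencies
    spectrum = np.fft.fft(state) * np.exp(-1j * freqs * displacement)
    return np.fft.ifft(spectrum).real

def forward(state, transition, gain=1.0):
    """Hypothetical forward function: latent transition -> phase displacement.
    A fixed linear gain stands in for a learned mapping (assumption)."""
    return gain * transition

def path_integrate(state, transitions):
    """Advance the structural state once per latent transition."""
    for z in transitions:
        state = shift(state, forward(state, z))
    return state

def decode_phase(state):
    """Read out the bump position as a population-vector angle in [0, 2*pi)."""
    return float(np.angle(np.sum(state * np.exp(1j * PHASES))) % (2.0 * np.pi))

start = bump(center=1.0)
end = path_integrate(start, transitions=[0.5, 0.25, -0.1])
print(round(decode_phase(end), 3))  # bump has moved from 1.0 to about 1.65
```

Because the state only ever moves along the ring, the same sequence of transitions produces the same displacement regardless of where the bump starts, which is the property that makes a velocity-like latent transition reusable.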

Structure Abstraction

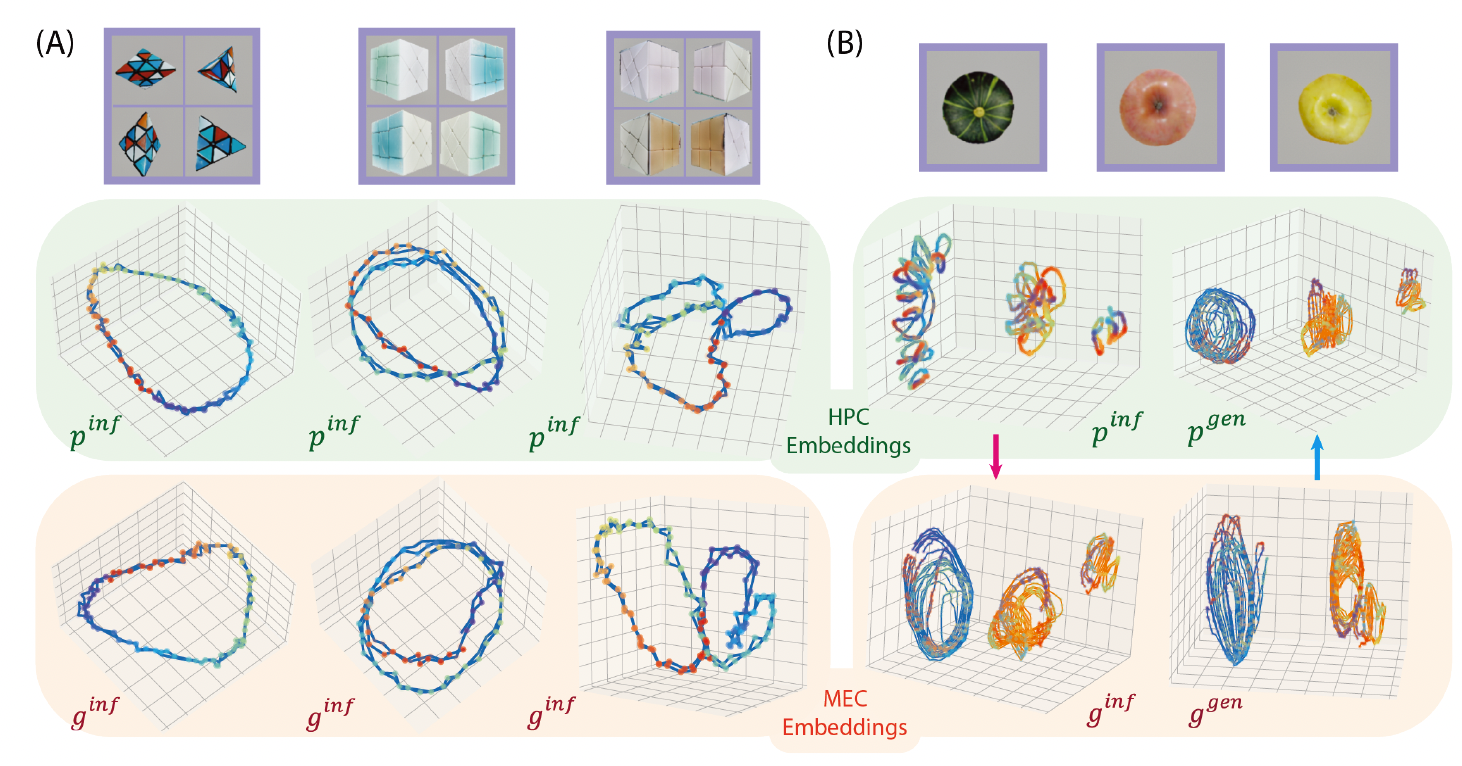

To test whether the model has learned abstract structure, the HPC-MEC World Model is trained on the large-scale human activity dataset Something-Something V2 without action labels, and then evaluated on unseen 3D object rotation data. Rotation is an especially suitable task for studying structural abstraction because it has clear periodicity: some objects return to their original appearance only after a full 360-degree rotation, while symmetric objects may exhibit repeated appearances after 180 or 90 degrees.

The results show that both HPC and MEC representation spaces exhibit periodic trajectories, but the MEC representations form clearer shared rotational structures. Furthermore, within object categories, such as different pumpkins, red apples, and yellow apples, HPC representations more readily distinguish individual instances, whereas MEC representations tend to overlap and capture category-level shared rotational structures.

This suggests that the model is not merely memorizing the rotation video of a particular object, but forming a more abstract structural trajectory in representation space.
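The periodicity argument behind the rotation test can be made concrete with a small numerical example. The sketch below is purely illustrative and uses made-up one-dimensional "appearance profiles" sampled once per degree; it is not the evaluation used in the paper. It finds the smallest rotation after which a profile looks identical to itself.

```python
import numpy as np

ANGLES = np.deg2rad(np.arange(360))  # one appearance sample per degree

def appearance_period_degrees(profile):
    """Smallest rotation, in whole degrees, after which the sampled
    circular profile repeats itself."""
    for k in range(1, len(profile) + 1):
        if np.allclose(np.roll(profile, k), profile):
            return k

# Hypothetical profiles with different rotational symmetries.
asymmetric = 1.0 + 0.3 * np.cos(ANGLES)      # no rotational symmetry
two_fold = 1.0 + 0.3 * np.cos(2 * ANGLES)    # looks the same every 180 degrees
four_fold = 1.0 + 0.3 * np.cos(4 * ANGLES)   # looks the same every 90 degrees

print([appearance_period_degrees(p) for p in (asymmetric, two_fold, four_fold)])
# [360, 180, 90]
```

A representation that captures rotational structure should trace a closed loop whose length matches this period, which is why periodic trajectories in representation space are a useful diagnostic.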

Structural Generalization

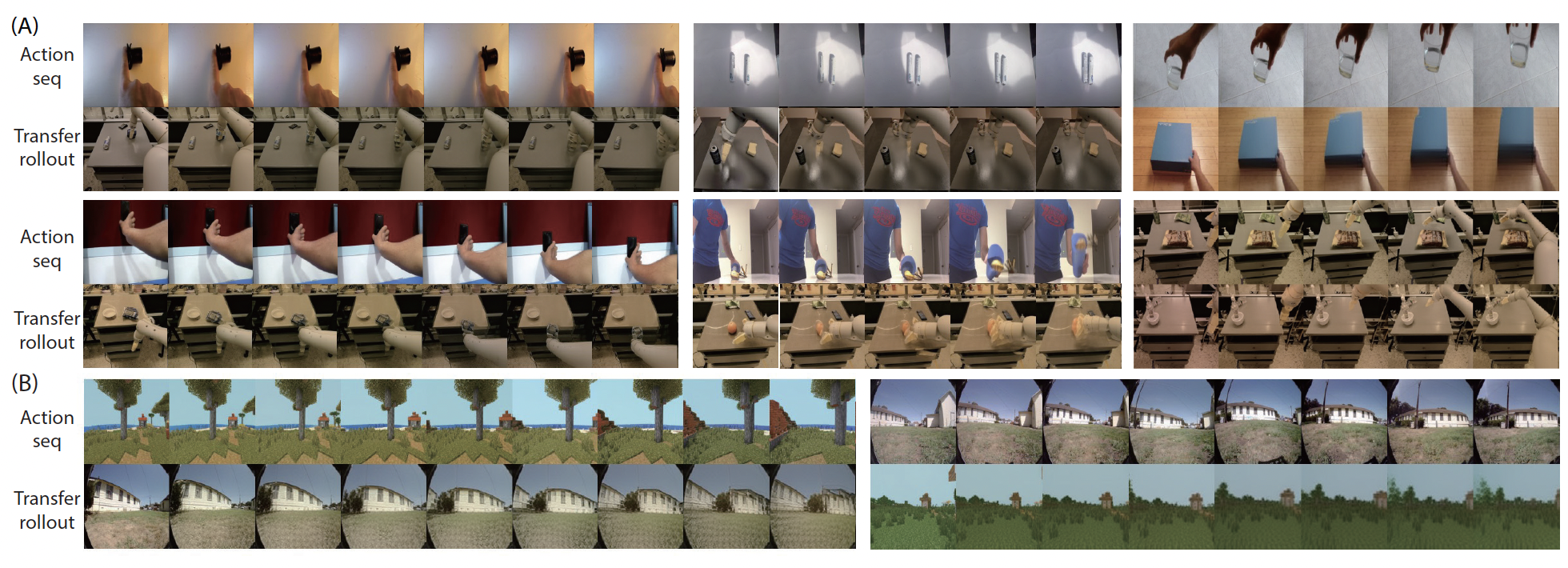

Another way to test structural abstraction is to evaluate generalization. The model extracts a latent transition from one video and applies it to the initial state of another object or scene. If the latent transition truly represents an abstract structural change, it should be transferable. For example, a rotational structure extracted from one object should be applicable to another object, causing the target object to rotate in a similar manner.

More broadly, the model is trained on real human activity videos such as Something-Something V2 and evaluated for structural reuse and out-of-distribution generalization on multiple simulated object and robot manipulation scenarios. These evaluations indicate that, even without action labels, a world model can abstract transferable latent transitions from continuous visual experience.

Ablation Studies

To verify the necessity of the model design, the paper compares several ablated variants:

- If a single-layer representation space is used, placing content and structure in the same space, the model is more prone to content leakage during structural reuse. That is, texture information from the source video leaks into the generated target sequence. This indicates that without content-structure separation, the internal structure extracted from a sequence is difficult to keep as pure motion semantics.

- If the CANN-inspired dynamics are removed and a standard state-action concatenation MLP is used instead of the path integration mechanism, the model also performs worse on out-of-distribution structure transfer tasks, suggesting that CANN dynamics contribute to structural generalization.

Summary

This work shifts the focus of world models from “predicting the next frame” to “learning reusable structure.” Through an HPC-MEC-inspired hierarchical design, the model separates content-rich episodic representations from abstract dynamic structure. Through CANN-inspired path integration, latent transitions advance structural states in MEC representations. Through cross-object and cross-scene transfer, the model demonstrates the ability to reuse and generalize structure.

DiLA: Disentangled Latent Action World Models

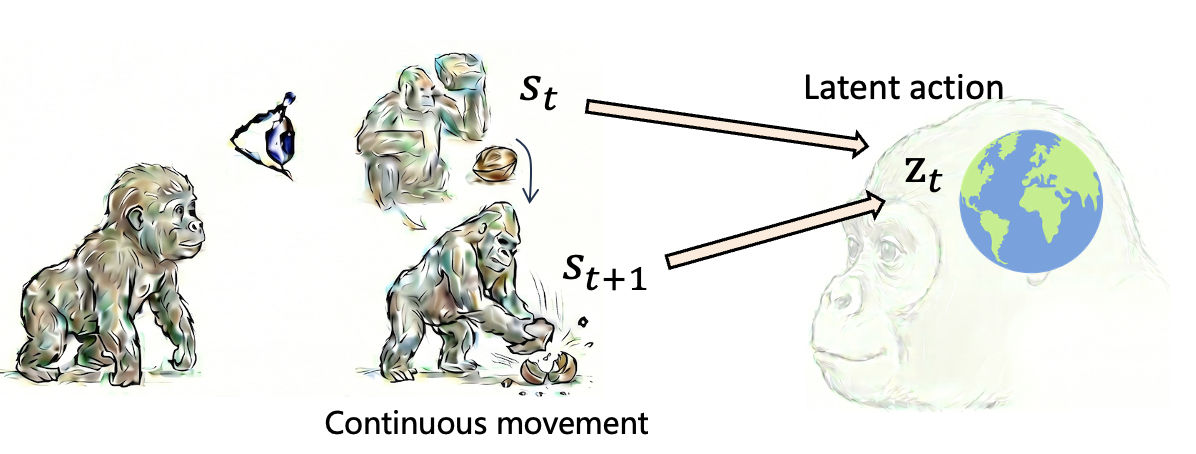

In AI, world models have largely relied on datasets with action labels. Compared with massive and easily accessible unlabeled video data, action-labeled data are scarce and costly to scale. To bridge this gap, Latent Action Models (LAMs)

- Inverse Dynamics Model (IDM): it infers a latent action $z_t$ from consecutive states $s_t, s_{t+1}$, and uses an information bottleneck to encourage $z_t$ to encode motion information.

- Forward Dynamics Model (FDM): it predicts the next state $s_{t+1}$ from the current state $s_t$ and the latent action $z_t$.

In this way, the model no longer needs externally provided action labels. Instead, it learns “what change caused the next state” from observation sequences in a self-supervised manner. From the perspective of structure learning, latent actions can be understood as abstract latent transition representations. They do not describe the image content itself, but the way one state changes into the next. In other words, latent actions are a computational expression of abstract structure in world models.

This makes LAMs an attractive framework for learning abstract structure. A large amount of real-world video data has no action labels: internet videos, human activity videos, first-person navigation videos, and cross-embodiment interaction videos usually provide only continuous observation sequences. These data contain rich internal structure, and LAMs provide a self-supervised route for extracting such structure from videos.

However, LAM training faces a central tension. If the latent action is compressed enough to become abstract, it becomes easier to transfer, but may lose the accuracy needed to predict the next state and the details required for generation. If the latent action retains enough information to improve generation quality, it can easily mix in content information such as color, texture, and background, making the action less abstract and harder to transfer. We call this problem the LAM Trade-off: the tension between action abstraction and prediction accuracy.
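A toy linear version of the IDM/FDM pair makes this tension concrete. The sketch below is an illustration under strong assumptions, not how any of the cited models are built: states are 8-dimensional vectors, "motion" is assumed to live in a fixed 2-dimensional subspace (`motion_basis`, a hypothetical name), and both models are fixed linear maps rather than trained networks. The 2-dimensional latent action plays the role of the information bottleneck.

```python
import numpy as np

rng = np.random.default_rng(0)
STATE_DIM, ACTION_DIM = 8, 2  # ACTION_DIM << STATE_DIM acts as the bottleneck

# Hypothetical toy world: "motion" changes live in a 2-D subspace of state space.
motion_basis, _ = np.linalg.qr(rng.normal(size=(STATE_DIM, ACTION_DIM)))

def idm(s_t, s_next):
    """Inverse dynamics: compress the observed change into a latent action z_t."""
    return motion_basis.T @ (s_next - s_t)

def fdm(s_t, z_t):
    """Forward dynamics: predict s_{t+1} from s_t and z_t."""
    return s_t + motion_basis @ z_t

def prediction_error(s_t, s_next):
    """Mean squared error of the IDM -> FDM round trip."""
    return float(np.mean((fdm(s_t, idm(s_t, s_next)) - s_next) ** 2))

s_t = rng.normal(size=STATE_DIM)

# Case 1: the next state differs from s_t by pure motion.
pure_motion = s_t + motion_basis @ np.array([0.3, -1.2])

# Case 2: the same motion plus appearance detail outside the motion subspace.
detail = rng.normal(size=STATE_DIM)
detail -= motion_basis @ (motion_basis.T @ detail)  # remove the motion component
motion_plus_detail = pure_motion + 0.5 * detail

print(prediction_error(s_t, pure_motion))         # essentially zero
print(prediction_error(s_t, motion_plus_detail))  # clearly non-zero
print(np.allclose(idm(s_t, pure_motion), idm(s_t, motion_plus_detail)))  # True
```

The narrow latent action is identical in both cases, which is what makes it abstract and transferable, but the detail in the second case cannot pass through the bottleneck and is lost from the prediction. Widening the bottleneck would recover the detail at the cost of letting content leak into the action.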

Most existing methods impose strong predictive bottlenecks to make action representations transferable and abstract, such as Vector Quantization (VQ)

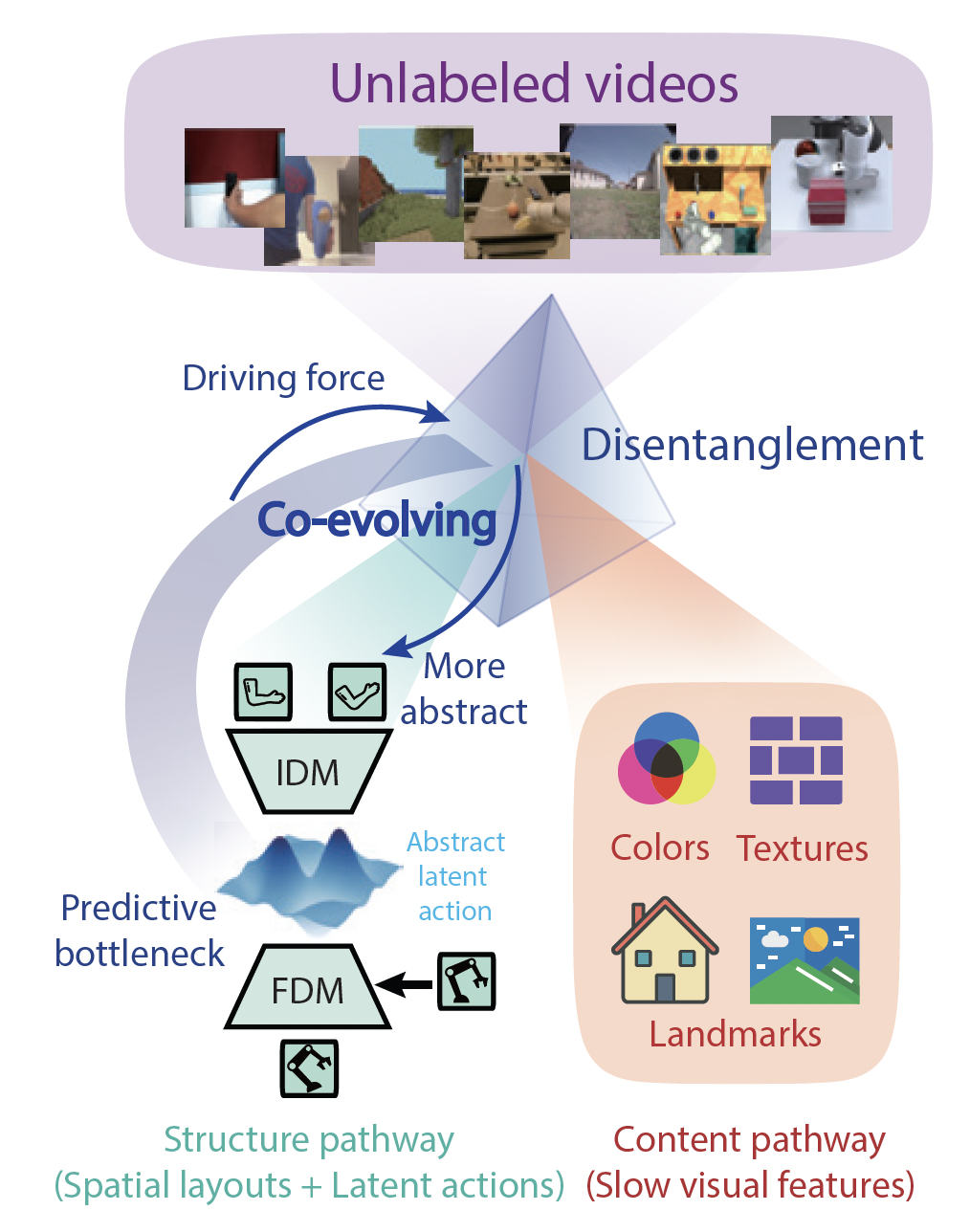

Core Idea

The work DiLA: Disentangled Latent Action World Models

- The structure pathway models motion-related spatial structure, such as position, shape, layout, and dynamic change. The latent action only needs to predict changes in the structure representation, rather than the full visual feature.

- The content pathway preserves visual details, such as color, texture, background, object appearance, and historical information.

DiLA does not learn latent actions from full visual representations. Instead, it forces latent actions to predict only the evolution of structure representations, so that latent actions focus on structural change while high-entropy visual details are routed to the content pathway. This promotes disentanglement in two ways. First, to minimize structure prediction error, the model is encouraged to encode dynamics-related motion information into the latent action and to move static visual details to the independent content pathway. Second, the predictive information bottleneck required for learning latent actions retains only dynamics-related features in the structure pathway and separates out appearance-related features that do not change over time. Disentanglement thus emerges during latent action learning. In turn, better content-structure disentanglement makes it easier for the LAM to learn an abstract, continuous, and transferable latent action space. The two processes reinforce each other.

Model Architecture

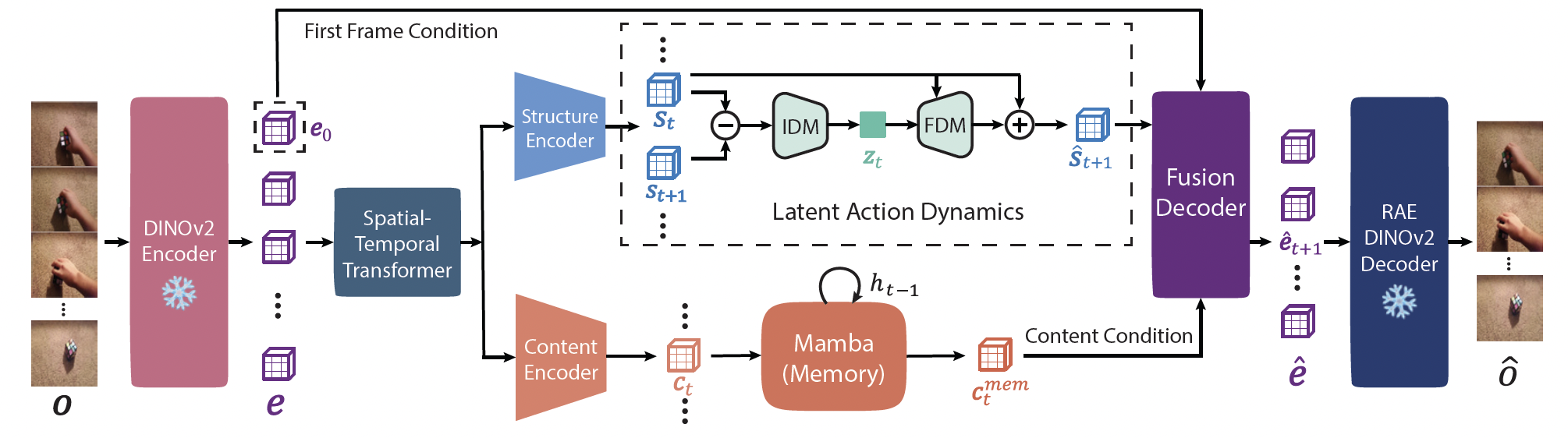

The overall architecture of DiLA can be summarized in three parts.

First, input videos are processed by a DINOv2 encoder and a Spatial-Temporal Transformer to obtain visual representations. These representations are then routed into different pathways:

- In the structure pathway, the model first compresses the representation into a structure embedding. The IDM then infers latent actions from differences between consecutive structure embeddings. The FDM predicts the next structure embedding from the current structure embedding and the latent action. This process makes the latent action primarily encode “how structure changes,” rather than how the full image changes.

- In the content pathway, the model uses Mamba as a memory module to aggregate historical content information. This design is analogous to slow feature analysis: it focuses on relatively stable visual content rather than rapidly changing dynamic structure.

Finally, the Fusion Decoder combines the predicted structure representation, the content memory representation, and initial-frame information to reconstruct the future visual embedding. That is, DiLA completes future prediction through:

predicted structure + remembered content + initial-frame detail → future visual embedding

A particularly noteworthy design is a latent action regularization loss inspired by the perspective of Lie groups. It uses a cosine similarity objective to enforce that temporally reversed transitions produce opposite latent action vectors. This geometric constraint aligns the latent space with meaningful motion dynamics while suppressing random and irrelevant distractors.
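One way such a reversal constraint could be written is sketched below. The exact form of the loss in the paper is not given here, so the `1 + cosine similarity` formulation, the function names, and the example vectors are all assumptions for illustration. The intuition matches the Lie group view: the inverse of a transformation generated by a vector corresponds to the negated vector, so a transition played backwards should map to the opposite latent action.

```python
import numpy as np

def cosine_similarity(a, b, eps=1e-8):
    """Row-wise cosine similarity between two batches of vectors."""
    num = np.sum(a * b, axis=-1)
    den = np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1) + eps
    return num / den

def reversal_loss(z_forward, z_backward):
    """Hypothetical regularizer: close to 0 when each time-reversed latent action
    points exactly opposite to its forward counterpart, close to 2 when both
    point the same way."""
    return float(np.mean(1.0 + cosine_similarity(z_forward, z_backward)))

# z_forward would come from IDM(s_t, s_{t+1}); z_backward from IDM(s_{t+1}, s_t).
z_forward = np.array([[1.0, 2.0], [0.5, -1.0]])

print(round(reversal_loss(z_forward, -z_forward), 6))  # 0.0: perfectly antisymmetric
print(round(reversal_loss(z_forward, z_forward), 6))   # 2.0: reversal ignored
```

Because cosine similarity ignores vector length, this form constrains only direction; a variant that also matches magnitudes would need an additional term.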

Motion Transfer

One of the most important experiments in DiLA is action transfer. The model extracts latent actions from a source video and applies them to another target scene. If the latent actions have truly learned abstract action semantics, the generated result should preserve the content of the target scene while reusing the dynamics of the source video. The paper presents multiple transfer scenarios, including human-to-robot action transfer, semantic action transfer across different objects and viewpoints, human-to-human and robot-to-robot intra-domain transfer, and cross-scene transfer between simulated and real navigation environments. These results show that the latent actions learned by DiLA are not merely pixel differences or local optical flow, but dynamic representations with a degree of reusability across objects, embodiments, and environments.

Disentanglement

To verify whether structure and content are genuinely separated, the paper designs a rebinding experiment. Specifically, structure is extracted from one video and content from another video, and the two are recombined to generate a new sequence. The results show that the generated sequence inherits the spatial dynamics of the structure sequence while preserving the colors, textures, and appearance attributes of the content sequence.

The paper also conducts a control experiment: the structure representation is fixed, while only the memory module in the content pathway evolves over time. If the content pathway leaked motion information, the generated result should still move. However, the generated sequence remains static. This shows that the content memory module mainly encodes temporally stable content information rather than motion itself.

This experiment is crucial because it directly supports the core claim of DiLA:

Latent action learning promotes structure-content separation through a predictive information bottleneck, and structure-content separation further improves the abstraction of latent actions.
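The logic of the rebinding and control experiments can be shown with a deliberately tiny example. Everything in the sketch below is hypothetical: frames are 8-pixel one-dimensional "images", structure is just an object position, content is a scalar brightness, `content_memory` is a running average standing in for the memory module, and `fusion_decode` is a stand-in decoder. It shows what the two experiments check, not how DiLA computes it.

```python
import numpy as np

WIDTH = 8  # toy 1-D "image" width

def content_memory(appearances, rate=0.5):
    """Hypothetical content memory: running average of per-frame appearance."""
    memory, history = appearances[0], []
    for a in appearances:
        memory = (1.0 - rate) * memory + rate * a
        history.append(memory)
    return history

def fusion_decode(position, appearance):
    """Hypothetical decoder: draw one object with `appearance` at `position`."""
    frame = np.zeros(WIDTH)
    frame[position % WIDTH] = appearance
    return frame

def render(structure_seq, content_seq):
    """Decode one frame per (structure, content) pair."""
    return np.stack([fusion_decode(p, c) for p, c in zip(structure_seq, content_seq)])

# Video A: the object moves right. Video B: a dim, slightly flickering object sits still.
structure_a = [0, 1, 2, 3]
structure_b = [5, 5, 5, 5]
appearance_b = [0.4, 0.5, 0.4, 0.5]

# Rebinding: motion from A, appearance from B.
rebound = render(structure_a, content_memory(appearance_b))

# Control: structure frozen at its first value while the content memory keeps evolving.
control = render([structure_b[0]] * 4, content_memory(appearance_b))

print(rebound.argmax(axis=1))  # [0 1 2 3]
print(control.argmax(axis=1))  # [5 5 5 5]
```

In the rebound sequence the object follows video A's trajectory with video B's brightness, and in the control sequence the object never moves even though the content memory changes from frame to frame. If the content pathway carried motion, the second result would not hold.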

The Latent Action Manifold

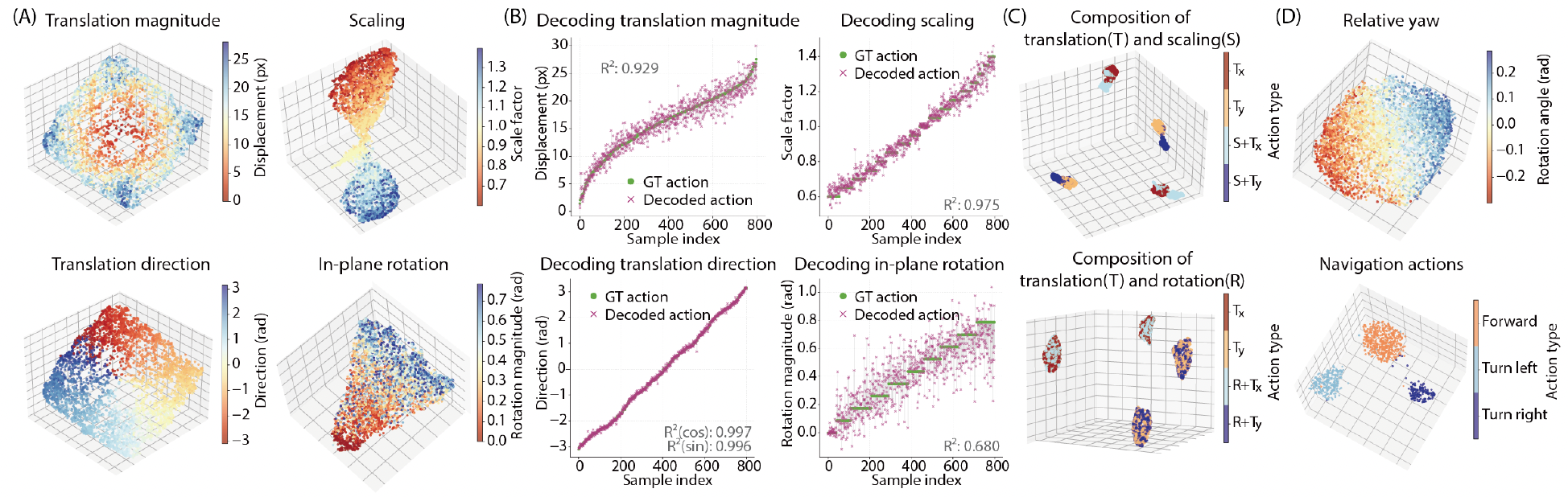

DiLA not only studies generation and transfer, but also analyzes the geometry of the latent action space. The paper constructs a controlled out-of-distribution benchmark using OmniObject3D, including primitive transformations such as translation, scaling, and rotation. UMAP visualizations show that different transformation types form continuous and interpretable manifolds in the latent action space. For example, translation forms a structure similar to a two-dimensional plane; scaling exhibits symmetry around the identity transformation; and rotation forms a continuous spectrum of rotation magnitudes. This indicates that the latent actions learned by DiLA are not discrete tokens, but action manifolds with continuous semantic structure. The paper further shows compositional actions, such as translation plus scaling, suggesting that the latent action space has a degree of compositionality.

In addition, the paper compares DiLA with methods such as LAPA, Moto, AdaWorld, and villa-X on generation, transfer, and action planning tasks, and validates the necessity of each module through ablation studies.

Summary

DiLA uses the predictive bottleneck as a driving force for content-structure disentanglement, which lets it balance the trade-off between action abstraction and generation fidelity. Experimental results show that this mutually reinforcing process enables DiLA to learn continuous action manifolds without explicit supervision. The disentangled representation allows the model to perform robustly on challenging downstream tasks, including cross-embodiment action transfer and visual planning.

From World Models to Abstraction and General Intelligence

From the perspective of abstraction hierarchy, the lowest level consists of actions executed at each moment. One level higher are latent actions: unified action representations abstracted from visual information. They no longer depend on a particular robot, body structure, or low-level action space, but instead attempt to abstract “what kind of change has occurred in the world.” Above that are skill representations, which compress a sequence of actions over time and can be viewed as a temporal compression of abstract structure. At the highest level are language instructions. Language can compress complex visual, action, and temporal relations into an intention that can be invoked across different scenes and embodiments. It is therefore an abstract representation that highly compresses both time and space.

Within this framework, a natural question arises: can we start from purely visual information and, through bottom-up hierarchical abstraction, eventually learn concept structures that are as compressed, compositional, and transferable as language? From the perspective of human development, language may not be a symbolic system that appears from nowhere. Rather, it may be built on top of conceptual structures gradually formed through visual, motor, and interactive experience, and then further bound to symbols. It both helps individuals construct cognition about the world and supports communication, collaboration, and planning among individuals.

The learning paradigm described above can be summarized as learning from observations. World models learn how the world changes by observing expert data, internet videos, robot videos, or human behavior trajectories, and from these data they obtain abstract visual and action representations. Unified representations trained on large-scale human and robot data have already been shown to improve vision-language-action (VLA) models, World Action Models, and other forms of policy. Yet relying solely on observations is still insufficient. Observation-based learning depends on the quality, coverage, and distribution of offline datasets, which means that the learned representations remain constrained by existing data. In a sense, this also mirrors the foundation of LLM success: if human text data are sufficiently rich, a model can learn large amounts of knowledge and many reasoning patterns from them. But the bottleneck is equally clear: when the data space itself cannot be further optimized, the model’s generalization ability will be bounded by the data that already exist.

By contrast, what makes reinforcement learning especially compelling is that it does not merely learn from existing data. It can change the data it will see through action. In other words, the data distribution in RL is not fixed; it is actively shaped by the agent’s behavior policy. The agent can explore the environment, attempt and fail, create new state transitions, and discover behavioral patterns that never appeared in the original dataset through interaction with the world. This is particularly important because a truly general intelligent system should not merely reproduce what humans have already done. It should also be able to discover strategies, skills, and solutions that humans have not yet systematically explored.

Therefore, the next key step is to combine learning from observations with learning from actions, forming a broader paradigm of self-supervised reinforcement learning. On one hand, the world model learns compressible, predictable, and transferable abstract structures from observation and interaction. On the other hand, the policy actively explores, discovers skills, and performs goal-conditioned control, thereby continually creating new data distributions for the world model. Put differently, an agent must learn not only “how the world changes,” but also “how I can make the world change.”

Here, unsupervised RL and goal-conditioned RL provide two instructive perspectives. Unsupervised RL is not concerned with completing a particular externally specified task. Instead, in the absence of explicit task rewards, it seeks to discover rich actionable structures in the environment: which states are reachable, which changes are controllable, and which behavioral patterns lead to new experiences. Its goal is not to learn an optimal policy for a single task, but to acquire a skill repertoire that covers a broader behavioral space.

Goal-conditioned RL, in turn, can be regarded as an implicit world model. On the surface, a goal-conditioned policy learns a conditional policy: given a current state and a goal state, output the action that should be taken. At a deeper level, however, it implicitly encodes the reachability structure and transition dynamics of the world. It knows “which states can become which states,” and “through what behavior such changes can be achieved.”

Therefore, when comparing LLMs with self-supervised RL, a key difference emerges: the data space of LLMs is primarily static, and the model can only learn within the existing text distribution; the data space of self-supervised RL is dynamic, because the agent can change its future data distribution through its own behavior. This means that self-supervised RL is not merely “training a model on data,” but “training a behavioral system capable of generating better data.” In this closed loop, the world model compresses and predicts experience, the policy explores and expands experience, and intrinsic objectives determine which experiences should be prioritized for learning.

This may be one of the most important directions for future world model research. A world model should not be seen only as a module in model-based RL for fitting transition dynamics, nor only as a future-frame predictor in video generation. More broadly, it should become the core structure connecting observation, action, and goal. It learns the statistical regularities of the world from observations, the controllable structures of the world from actions, and the reachability relations among states from goal-conditioned behavior. Ultimately, it forms an abstract representation that can both understand the world and actively change it.